AI Governance

- The use of AI and machine learning (ML) has gained momentum as organizations evaluate the potential applications of AI to enhance the customer experience, improve operational efficiencies, and automate business processes.

- Growing applications of AI have reinforced concerns about ethical, fair, and responsible use of the technology that assists or replaces human decision making.

Our Advice

Critical Insight

- Implementing AI systems requires careful management of the AI lifecycle, governing data, and machine learning model to prevent unintentional outcomes not only to an organization’s brand reputation but, more importantly, to workers, individuals, and society.

- When adopting AI, it is important to have a strong ethical and risk management framework surrounding its use.

Impact and Result

- AI governance enables management, monitoring, and control of all AI activities within an organization.

AI Governance Research & Tools

Besides the small introduction, subscribers and consulting clients within this management domain have access to:

1. AI Governance Deck – A framework for building responsible, ethical, fair, and transparent AI.

Create the foundation that enables management, monitoring, and control of all AI activities within the organization. The AI governance framework will allow you to define an AI risk management approach and defines methodology for managing and monitoring the AI/ML models in production.

- AI Governance Storyboard

Further reading

AI Governance

A Framework for Building Responsible, Ethical, Fair, and Transparent AI

Are you ready for AI?

Business leaders must manage the associated risks as they scale their use of AI

| In recent years, following technological breakthroughs and advances in development of machine learning (ML) models and management of large volumes of data, organizations are scaling their use of artificial intelligence (AI) technologies.

The use of AI and ML has gained momentum as organizations evaluate the potential applications of AI to enhance the customer experience, improve operational efficiencies, and automate business processes. Growing applications of AI have reinforced concerns about ethical, fair, and responsible use of the technology that assists or replaces human decision-making. Implementing AI systems requires careful management of the AI lifecycle, governing data, and machine learning model to prevent unintentional outcomes not only to an organization’s brand reputation but also, more importantly, to workers, individuals, and society. When adopting AI, it is important to have strong ethical and risk management frameworks surrounding its use. |

“Responsible AI is the practice of designing, building and deploying AI in a manner that empowers people and businesses, and fairly impacts customers and society – allowing companies to engender trust and scale AI with confidence.” (World Economic Forum) |

Regulations and risk assessment tools

Governments around the world are developing AI assessment methodologies and legislation for AI. Here are a couple of examples:

- Responsible use of artificial intelligence (AI) guiding principles (Canada):

- understand and measure the impact of using AI by developing and sharing tools and approaches

- be transparent about how and when we are using AI, starting with a clear user need and public benefit

- provide meaningful explanations about AI decision-making, while also offering opportunities to review results and challenge these decisions

- be as open as we can by sharing source code, training data, and other relevant information, all while protecting personal information, system integration, and national security and defense

- provide sufficient training so that government employees developing and using AI solutions have the responsible design, function, and implementation skills needed to make AI-based public services better

- The Algorithmic Impact Assessment tool (Canada) is used to determine the impact level of an automated decision-system. It defines 48 risk and 33 mitigation questions. Assessment scores consider factors such as systems design, algorithm, decision type, impact, and data.

- The National AI Initiative Act of 2020 (DIVISION E, SEC. 5001) (US) became law on January 1, 2021. This is a program across the entire Federal government to accelerate AI research and application.

- Bill C-27, Artificial Intelligence and Data Act (AIDA) (Canada), when passed, would be the first law in Canada regulating the use of artificial intelligence systems.

- The EU Artificial Intelligence Act (EU) assigns applications of AI to three risk categories: applications and systems that create an unacceptable risk, such as government-run social scoring; high-risk applications, such as a CV-scanning tool that ranks job applicants; and lastly, applications not explicitly listed as high-risk.

- The FEAT Principles Assessment Methodology was created by the Monetary Authority of Singapore (MAS) in collaboration with other 27 industry partners for financial institutions to promote fairness, ethics, accountability, and transparency (FEAT) in the use of artificial intelligence and data analytics (AIDA).

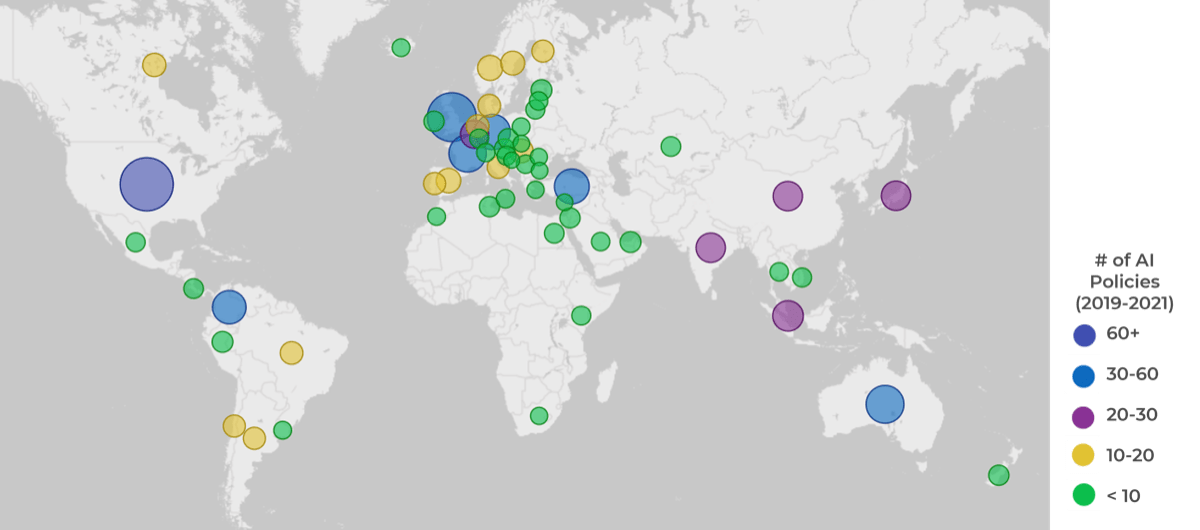

AI policies around the world

Source of data: OECD.AI (2021), powered by EC/OECD (2021), database of national AI policies, accessed on 7/09/2022, https://oecd.ai.

The need for AI governance

“To adopt AI, organizations will need to review and enhance their processes and governance frameworks to address new and evolving risks.” (Canadian RegTech Association, Safeguarding AI Use Through Human-Centric Design, 2020)

| To ensure responsible, transparent, and ethical AI systems, organizations will need to review existing risk control frameworks and update them to include AI risk management and impact assessment frameworks and processes.

As ML and AI technologies are constantly evolving, the AI governance and AI risk management frameworks will need to evolve to ensure the appropriate safeguards and controls are in place. This applies not only to the machine learning models and AI system custom built by the organization’s data science and AI team, but it also includes AI-powered vendor tools and technologies. The vendors should be able to explain how AI is used in their products, how the model was trained, and what data was used to train the model. AI governance enables management, monitoring, and control of all AI activities within an organization. |

|

Key concepts

Info-Tech Research Group defines the key terms used in this document as follows:

Machine learning systems learn from experience and without explicit instructions. They learn patterns from data, then analyze and make predictions based on past behavior and the patterns learned.

Artificial intelligence is a combination of technologies and can include machine learning. AI systems perform tasks that mimic human intelligence, such as learning from experience and problem solving. Most importantly, AI makes its own decisions without human intervention.

We use the definition of data ethics by Open Data Institute: “Data ethics is a branch of ethics that considers the impact of data practices on people, society and the environment. The purpose of data ethics is to guide the values and conduct of data practitioners in data collection, sharing and use.”

Algorithmic or machine bias is systematic and repeatable errors in a computer system that create unfair outcomes, such as privileging one arbitrary group of users over others. Algorithmic bias is not a technical problem. It’s a social and political problem, and in the context of implementing AI for business benefits, it’s a business problem.

Download the blueprint Mitigate Machine Bias blueprint for detailed discussion on bias, fairness, and transparency in AI systems

Key concepts – explainable, transparent and trustworthy

| “Responsible AI is the practice of designing, building and deploying AI in a manner that empowers people and businesses and fairly impacts customers and society – allowing companies to engender trust and scale AI with confidence” (CIFAR).

The AI system is considered trustworthy when people understand how the technology works and when we can assess that it’s safe and reliable. We must be able to trust the output of the system and understand how the system was designed, what data was used to train it, and how it was implemented. Explainable AI, sometimes abbreviated as XAI, refers to the ability to explain how an AI model makes predictions, its anticipated impact, and its potential biases. Transparency means communicating with and empowering users by sharing information internally and with external stakeholders, including beneficiaries and people impacted by the AI-powered product or service. |

68% [of Canadians] are concerned they don’t understand the technology well enough to know the risks. 77% say they are concerned about the risks AI poses to society (TD, 2019) |

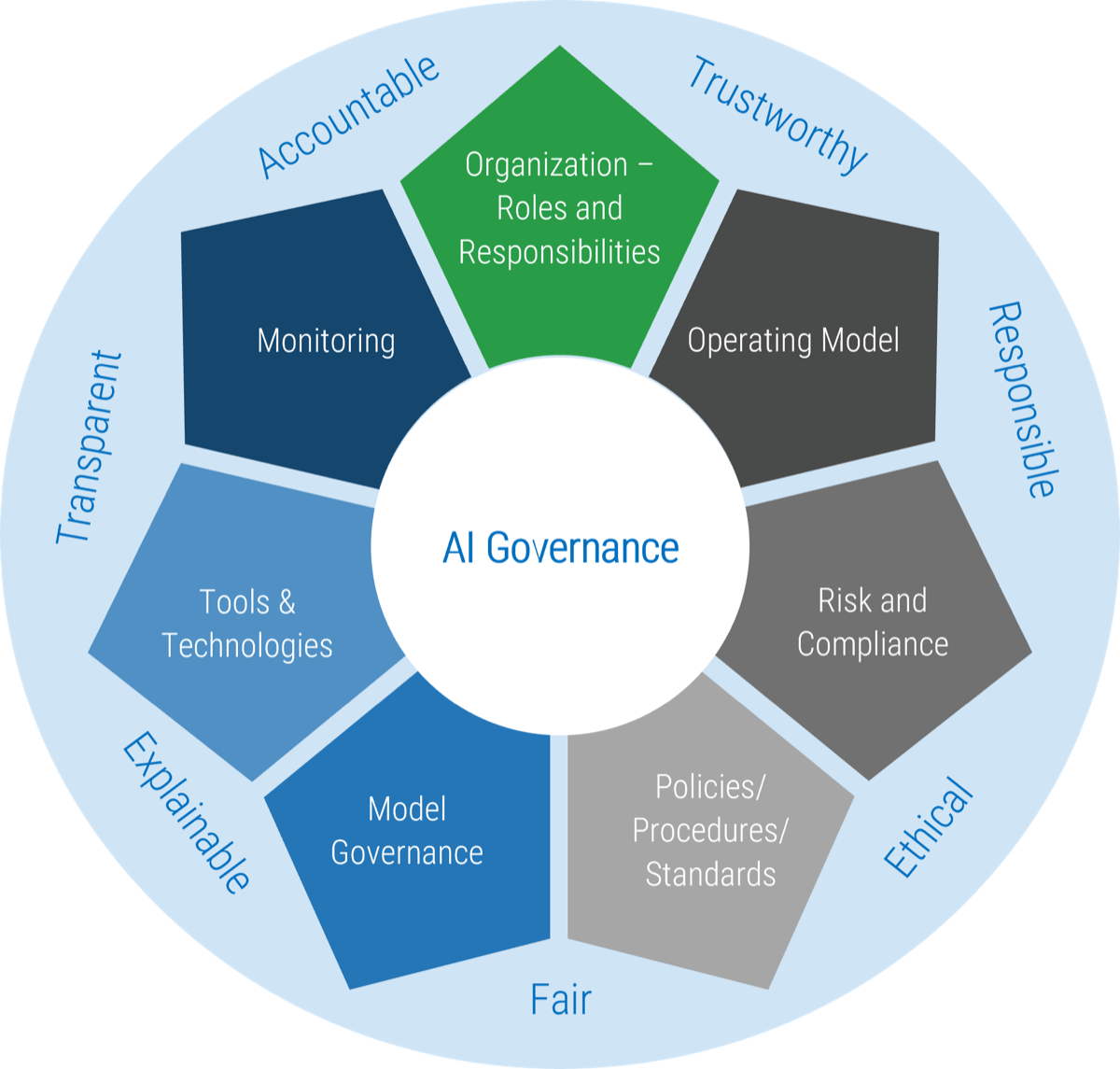

AI Governance Framework

|

Monitoring

Tools & Technologies

Model Governance

|

|

Organization

Structure, roles, and responsibilities of the AI governance organization Operating Model

Risk and Compliance

Policies and procedures to support implementation of AI governance |

Buying Options

AI Governance

Client rating

Cost Savings

Days Saved

IT Risk Management · IT Leadership & Strategy implementation · Operational Management · Service Delivery · Organizational Management · Process Improvements · ITIL, CORM, Agile · Cost Control · Business Process Analysis · Technology Development · Project Implementation · International Coordination · In & Outsourcing · Customer Care · Multilingual: Dutch, English, French, German, Japanese · Entrepreneur

Tymans Group is a brand by Gert Taeymans BV

Gert Taeymans bv

Europe: Koning Albertstraat 136, 2070 Burcht, Belgium — VAT No: BE0685.974.694 — phone: +32 (0) 468.142.754

USA: 4023 KENNETT PIKE, SUITE 751, GREENVILLE, DE 19807 — Phone: 1-917-473-8669

Copyright 2017-2022 Gert Taeymans BV